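Setting up a k8s environment

- Official site

- System environment

- Architecture diagram

- IP planning

- Environment preparation

- Installing kubeadm, kubectl, and kubelet

- Installing docker

- Testing

Official site

For what k8s is and what it is used for, see the introduction in the official documentation.

Let's get familiar with the related concepts first.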

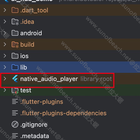

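From this diagram we can see that the left side controls the various nodes on the right.

The left side can be thought of as the manager.

It's like a company: the management (master), and the factories and stores (nodes).

The cm here is the manager, the controller manager.

etcd is like the records office: it stores the cluster's configuration data.

sched is the scheduler, and api (the API server) is roughly the secretary's office.

The other nodes join the master, which you can think of as registering with the master so that work can be scheduled onto them.

"Node 1, bring up an XXX for me. Node 2, bring up an XXX for me."

That's roughly how it works. Of course, everything here is deployed through docker. In practice a node corresponds to one server. The server runs docker, and k8s manages the containers on top of docker. For k8s, though, the smallest unit of management is the pod, and one pod can hold multiple containers.

System environment

I mostly use ubuntu, so for the host OS here I'm using the ubuntu-server edition.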

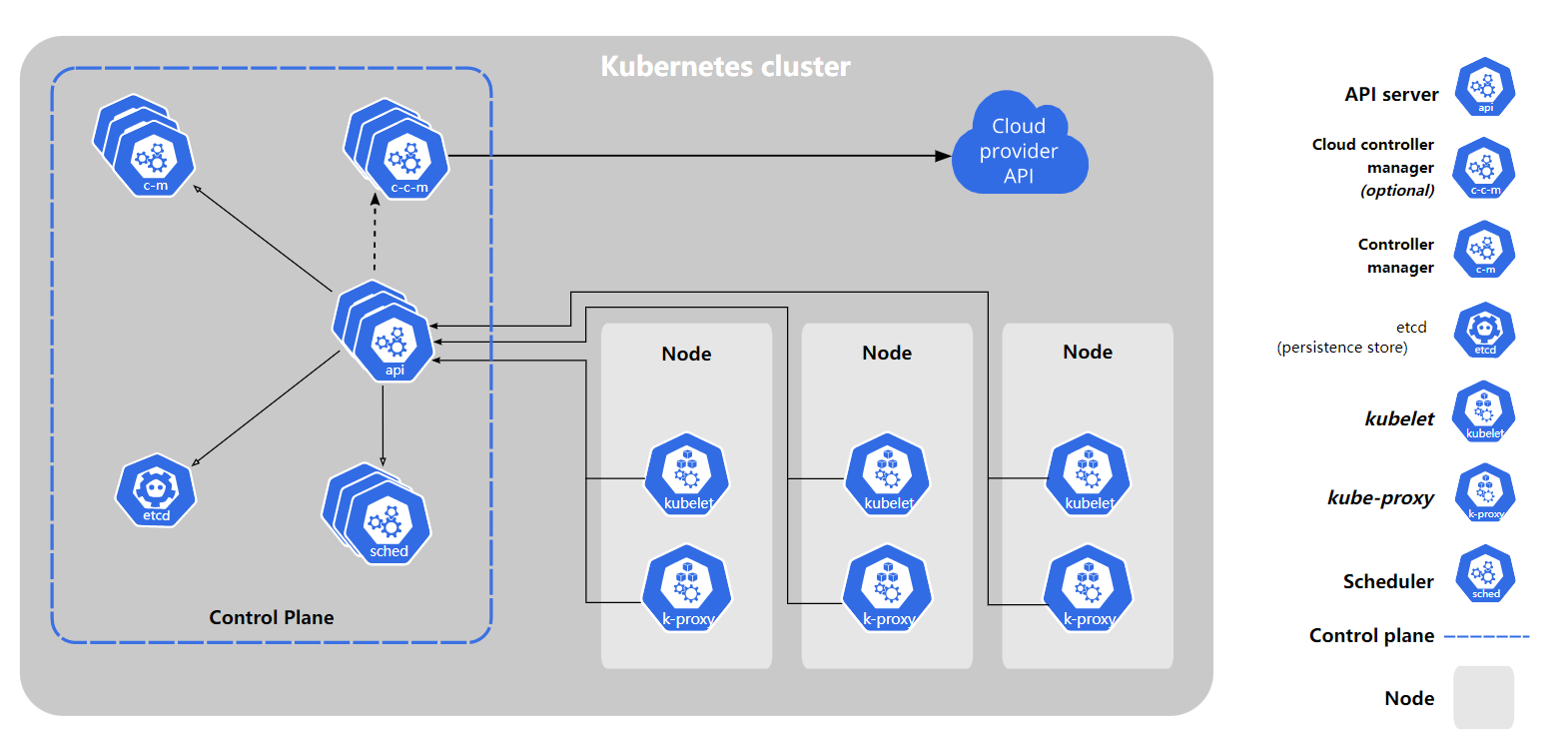

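Architecture diagram

The goal of this article is to deploy a blog system with k8s. Let's first look at what the deployment should look like.

This is a three-server layout: one master and two workers.

In a real production environment, of course, there would be multiple masters and multiple nodes.

We don't need to care which node a given application ends up on; k8s takes care of that, and we just publish.

So what are we going to deploy?

- redis

- nginx

- mysql

- web application

- front-end application

- front-end admin system

IP planning

We need to make sure the servers can talk to each other. In real production we usually buy servers in the same region and have them reach each other over private IPs, which costs no traffic fees and is fast.

When we buy servers they are generally added to a network group, which is effectively a LAN. Inside that LAN they can reach each other.

I don't have the money right now, so I'm not buying any yet. Next time, when we deploy 摸鱼君, I'll just buy pay-as-you-go servers to record the course, haha.

My IP plan is as follows (the same addresses are used in the /etc/hosts mapping later): 192.168.169.129 for k8smaster, 192.168.169.130 for k8snodea, and 192.168.169.131 for k8snodeb.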

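Environment preparation

I'm using ubuntu 18.04 here.

We need to prepare a few things, such as making sure ssh is installed for remote connections. It should be there by default; the system images from cloud providers generally include it. You also need to open the required ports in the security group.

Let iptables see bridged traffic

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
br_netfilter
EOF

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

sudo sysctl --system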
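The modules-load.d file only loads br_netfilter at boot; to load it right away, `sudo modprobe br_netfilter` can be used. As a small sanity check, here is a sketch that confirms a sysctl file sets both bridge keys to 1. The function name `check_bridge_sysctl` is made up for this article and is not part of any tool.

```shell
# Hypothetical helper: succeeds only if FILE sets both bridge sysctl keys to 1.
check_bridge_sysctl() {
  file="$1"
  for key in net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables; do
    # escape the dots so they match literally in the regular expression
    escaped=$(printf '%s' "$key" | sed 's/\./\\./g')
    grep -Eq "^[[:space:]]*${escaped}[[:space:]]*=[[:space:]]*1[[:space:]]*\$" "$file" || return 1
  done
  return 0
}

# Typical use on a node:
#   sudo modprobe br_netfilter      # load the module now instead of waiting for a reboot
#   lsmod | grep br_netfilter       # confirm it is loaded
#   check_bridge_sysctl /etc/sysctl.d/k8s.conf && echo "bridge sysctls OK"
```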

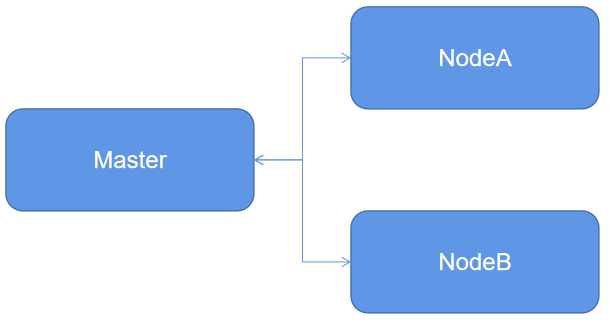

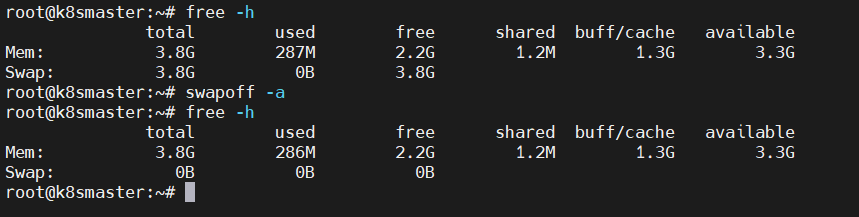

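Disabling the swap partition

On ubuntu, we edit the file directly and comment out the swap line

vi /etc/fstab

This takes effect after a reboot. To turn swap off right now without rebooting, run

swapoff -a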
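If you would rather not edit /etc/fstab by hand, the same change can be scripted. This is a minimal sketch, assuming the swap entries are ordinary fstab lines with `swap` as a whitespace-separated field; `comment_out_swap` is a hypothetical name.

```shell
# Hypothetical helper: comment out every active swap entry in an fstab-style FILE.
# The original is kept next to it as FILE.bak.
comment_out_swap() {
  sed -E -i.bak '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

# Typical use on a node, as root:
#   swapoff -a                     # turn swap off for the running system
#   comment_out_swap /etc/fstab    # keep it off after the next reboot
```

Lines that are already commented out are left alone, so running it twice does no harm.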

Adding hostname mappings on the worker nodes

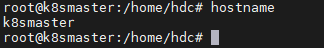

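Set the hostname on each host machine.

Setting the hostname manually, which only lasts until the next reboot

hostname k8smaster

A permanent setting

hostnamectl set-hostname k8smaster

Editing the host mapping

Path: the /etc/hosts file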

192.168.169.129 k8smaster

192.168.169.130 k8snodea

192.168.169.131 k8snodeb
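Since the same three lines have to go onto every machine, it can help to add them with a small script that is safe to run more than once. A sketch under that assumption; `add_host_entry` is a hypothetical helper, and the IPs are the ones from this article.

```shell
# Hypothetical helper: append "IP NAME" to FILE unless NAME is already mapped there.
add_host_entry() {
  file="$1"; ip="$2"; name="$3"
  # skip when the hostname already appears as a whole word on a non-comment line
  if grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Typical use on each machine, as root:
#   add_host_entry /etc/hosts 192.168.169.129 k8smaster
#   add_host_entry /etc/hosts 192.168.169.130 k8snodea
#   add_host_entry /etc/hosts 192.168.169.131 k8snodeb
```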

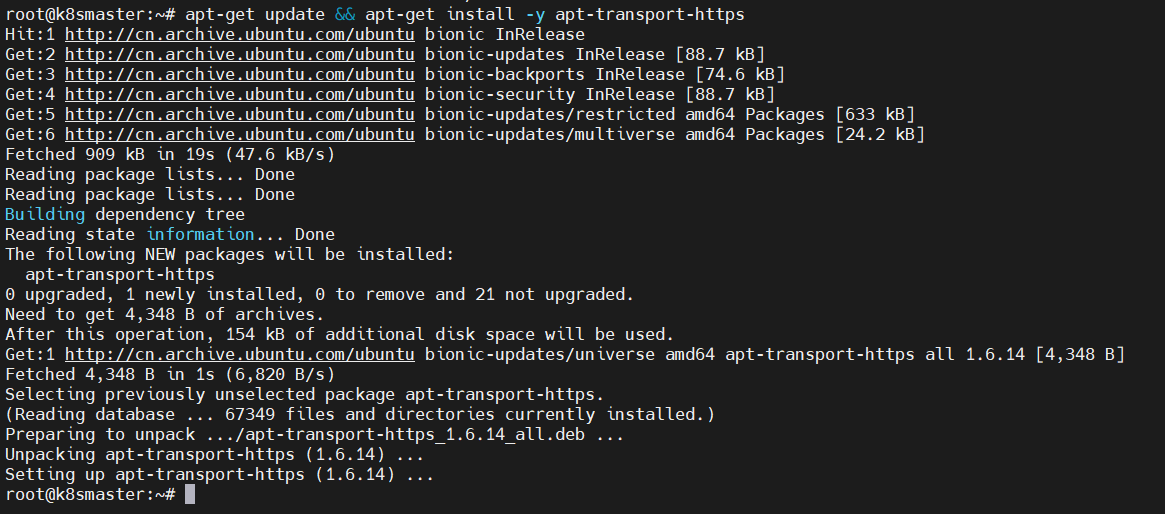

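Installing kubeadm, kubectl, and kubelet

The official documentation installs from Google's package source, which most people in mainland China cannot reach.

So here is how to install kubeadm, for example, from the Aliyun mirror

- Install HTTPS transport support for apt

apt-get update && apt-get install -y apt-transport-https

- Add the signing key

curl https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -

- Set the mirror source

cat << EOF > /etc/apt/sources.list.d/kubernetes.list
deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main
EOF

- Update the package index

apt-get update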

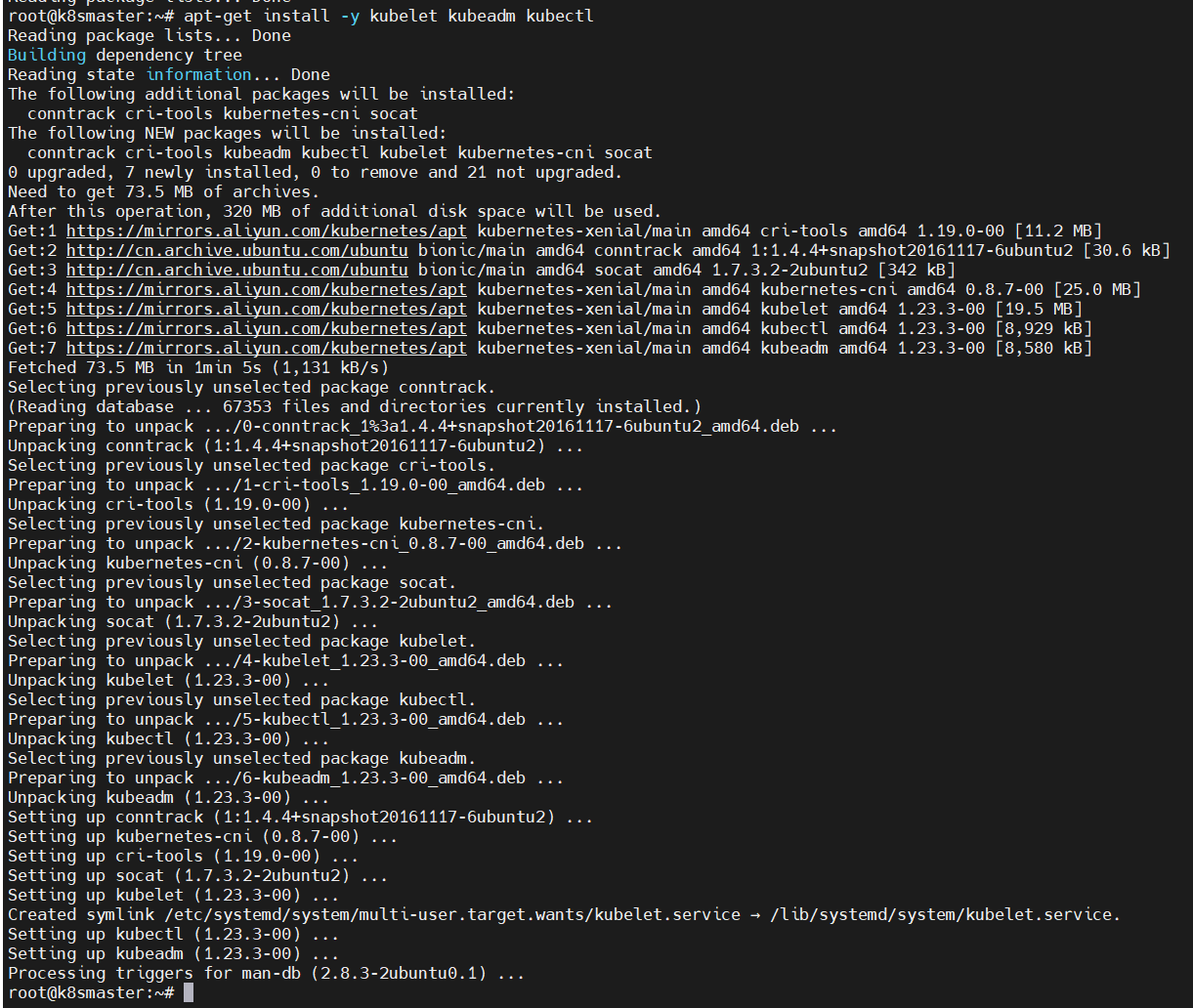

- Install kubeadm, kubectl, and kubelet

apt-get install -y kubelet kubeadm kubectl
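The command above installs whatever version is newest in the mirror, which may not be the version passed to kubeadm init later. One way to keep them in step is to pin the packages. This is a sketch only: the version string `1.23.3-00` is an assumption about how this repository names its packages, so list the real ones with `apt-cache madison kubeadm` first. `k8s_pkg_args` is a hypothetical helper.

```shell
# Hypothetical helper: build the "name=version" arguments for the three packages.
k8s_pkg_args() {
  printf 'kubelet=%s kubeadm=%s kubectl=%s\n' "$1" "$1" "$1"
}

# Typical use, as root (the version string is an assumption, check apt-cache madison):
#   apt-cache madison kubeadm
#   apt-get install -y $(k8s_pkg_args 1.23.3-00)
#   apt-mark hold kubelet kubeadm kubectl    # stop apt upgrade from moving them
```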

Installing docker

Installing docker on ubuntu is simple

apt-get install docker.io -y

Change the docker registry mirror

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://mdenjp89.mirror.aliyuncs.com"]
}
EOF

sudo systemctl daemon-reload

sudo systemctl restart docker

Log in at the address below to look up your own mirror URL

https://cr.console.aliyun.com/cn-shanghai/instances/mirrors

Everything above, the docker install and the kubelet, kubeadm, and kubectl install, has to be done on every machine.

At this point we have kubelet, kubeadm, kubectl, and docker in place.

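Creating the master node

On the master machine, we run

kubeadm init \
  --apiserver-advertise-address=192.168.169.129 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.3 \
  --service-cidr=10.1.0.0/16 \
  --pod-network-cidr=192.167.0.0/16

If the kubernetes-version value causes an error, use the version the error message suggests; versions keep moving.

apiserver-advertise-address=192.168.169.129 is the IP address the API server advertises. This flag expects an IP address; to address the control plane by hostname, kubeadm has the separate --control-plane-endpoint flag.

service-cidr is an IP range with a 16-bit mask, so only the last two numbers vary: 10.1.x.x.

pod-network-cidr=192.167.0.0/16: pods need to talk to each other, so each pod is automatically given an IP. This range also uses a 16-bit mask, which gives 65536-2 usable addresses.

This range must match the one in the flannel plugin's configuration.

flannel provides the network layer for Kubernetes: it gives the Docker containers created on different node hosts virtual IP addresses that are unique across the whole cluster.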
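The address count for a mask length is just 2 to the power of (32 - mask). A quick sketch of that arithmetic; `cidr_size` is a hypothetical helper name.

```shell
# Hypothetical helper: number of addresses in an IPv4 block with the given prefix length.
cidr_size() {
  echo $(( 1 << (32 - $1) ))
}

# cidr_size 16 gives the total for 10.1.0.0/16 or 192.167.0.0/16
# cidr_size 24 gives the size of a /24 slice
```

One thing to be aware of: 192.167.0.0/16 is not one of the private ranges (10.0.0.0/8, 172.16.0.0/12, 192.168.0.0/16). It works for this demo, but a private range that does not overlap the host network, such as flannel's default 10.244.0.0/16, is the more usual choice.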

If you see log output like this

[kubelet-check] The HTTP call equal to 'curl -sSL http://localhost:10248/healthz' failed with error: Get "http://localhost:10248/healthz": dial tcp 127.0.0.1:10248: connect: connection refused.

[kubelet-check] It seems like the kubelet isn't running or healthy.

[kubelet-check] The HTTP call equal to 'curl -sSL http://localhost:10248/healthz' failed with error: Get "http://localhost:10248/healthz": dial tcp 127.0.0.1:10248: connect: connection refused.

[kubelet-check] It seems like the kubelet isn't running or healthy.

[kubelet-check] The HTTP call equal to 'curl -sSL http://localhost:10248/healthz' failed with error: Get "http://localhost:10248/healthz": dial tcp 127.0.0.1:10248: connect: connection refused.

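Fix

The usual cause is a cgroup driver mismatch: kubeadm configures the kubelet for the systemd driver by default, while docker defaults to cgroupfs. Put this in the config file /etc/docker/daemon.json (if the file already holds the registry-mirrors setting from earlier, add the key to the existing object instead of replacing the file)

{"exec-opts": ["native.cgroupdriver=systemd"]}

My current user is already root

Reload the configuration, then reset so that kubeadm init can be run again

systemctl daemon-reload

systemctl restart docker

systemctl restart kubelet

kubeadm reset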
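Because the registry mirror and the cgroup driver live in the same daemon.json, writing the file twice with different contents loses one of the two settings. Here is a sketch that writes both keys together; `write_daemon_json` is a hypothetical helper and the mirror URL is whatever you looked up for your own account.

```shell
# Hypothetical helper: write a daemon.json that carries both settings.
#   $1 = output path, $2 = registry mirror URL
write_daemon_json() {
  cat > "$1" <<EOF
{
  "registry-mirrors": ["$2"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Typical use, as root:
#   write_daemon_json /etc/docker/daemon.json "https://mdenjp89.mirror.aliyuncs.com"
#   systemctl daemon-reload && systemctl restart docker
#   docker info | grep -i "cgroup driver"    # check which driver docker reports
```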

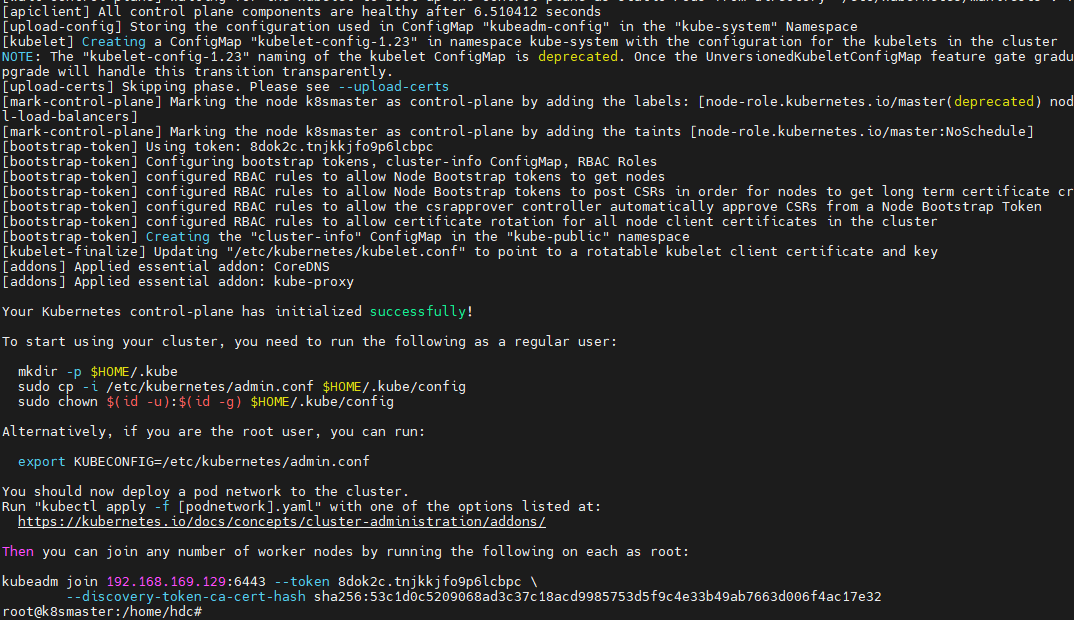

The master node initialized successfully

As you can see, the last part of the output is

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.169.129:6443 --token 8dok2c.tnjkkjfo9p6lcbpc \
  --discovery-token-ca-cert-hash sha256:53c1d0c5209068ad3c37c18acd9985753d5f9c4e33b49ab7663d006f4ac17e32

This token is valid for 24 hours. If it has expired, generate a new one with

kubeadm token create --print-join-command

In other words, running this command on each of the other worker nodes

kubeadm join 192.168.169.129:6443 --token 8dok2c.tnjkkjfo9p6lcbpc \
  --discovery-token-ca-cert-hash sha256:53c1d0c5209068ad3c37c18acd9985753d5f9c4e33b49ab7663d006f4ac17e32

joins them to the master so they can be managed
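When the join command is copied between terminals it is easy to lose a character. Bootstrap tokens have a fixed shape, six characters, a dot, then sixteen characters, all lowercase letters or digits, so a quick format check is possible before running the join. A sketch; `is_bootstrap_token` is a hypothetical helper.

```shell
# Hypothetical helper: succeeds if the argument has the shape of a kubeadm bootstrap token.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Typical use:
#   is_bootstrap_token 8dok2c.tnjkkjfo9p6lcbpc && echo "token looks well-formed"
#   kubeadm token list     # on the master: shows existing tokens and when they expire
```

This only checks the format. Whether the token is still valid is something only the master can tell you.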

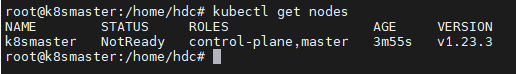

Before joining, let's look at the nodes we currently have

There is only the master

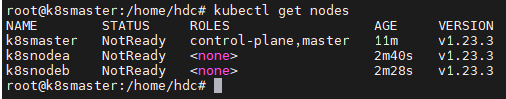

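Then we run the join command from earlier on node A. The prerequisite, of course, is that the common environment from the earlier steps is already installed, so kubeadm is available.

The node joined successfully

At this point the output tells us we can go to the master and look at the nodes

If you run into this problem

The connection to the server localhost:8080 was refused - did you specify the right host or port?

Check whether the config file exists

ls -l /etc/kubernetes/admin.conf

If it exists, set the environment variable

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

Apply the environment variable

source ~/.bash_profile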
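An alternative to the environment variable is to copy the admin config to the place kubectl reads by default, $HOME/.kube/config, which is also what the kubeadm init output suggests. That avoids depending on which shell startup file gets read. A sketch with a hypothetical helper name, `install_kubeconfig`:

```shell
# Hypothetical helper: copy the admin kubeconfig to kubectl's default location.
#   $1 = source file (default /etc/kubernetes/admin.conf)
#   $2 = target directory (default $HOME/.kube)
install_kubeconfig() {
  src="${1:-/etc/kubernetes/admin.conf}"
  dest_dir="${2:-$HOME/.kube}"
  mkdir -p "$dest_dir" &&
    cp "$src" "$dest_dir/config" &&
    chmod 600 "$dest_dir/config"    # the file contains cluster-admin credentials
}

# Typical use on the master, as root:
#   install_kubeconfig
#   kubectl get nodes
```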

Installing the Pod network plugin flannel

Create the file kube-flannel.yml

Download URL

https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

A proxy may be needed to reach it from mainland China

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "192.167.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        #image: flannelcni/flannel-cni-plugin:v1.0.1 for ppc64le and mips64le (dockerhub limitations may apply)
        image: rancher/mirrored-flannelcni-flannel-cni-plugin:v1.0.1
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        #image: flannelcni/flannel:v0.16.3 for ppc64le and mips64le (dockerhub limitations may apply)
        image: rancher/mirrored-flannelcni-flannel:v0.16.3
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        #image: flannelcni/flannel:v0.16.3 for ppc64le and mips64le (dockerhub limitations may apply)
        image: rancher/mirrored-flannelcni-flannel:v0.16.3
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

Change the network range in it to the one we set during init

  net-conf.json: |
    {
      "Network": "192.167.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

Then install the plugin

kubectl apply -f kube-flannel.yml
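Since a mismatch between this Network value and the --pod-network-cidr passed to kubeadm init is an easy mistake to make, a quick check before applying can save some debugging. A minimal sketch; `flannel_network` is a hypothetical helper that just pulls the value out of the manifest text.

```shell
# Hypothetical helper: print the "Network" value from a kube-flannel.yml file.
flannel_network() {
  sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Typical use on the master:
#   [ "$(flannel_network kube-flannel.yml)" = "192.167.0.0/16" ] && echo "CIDR matches"
#   kubectl get pods -n kube-system -o wide    # after applying: the flannel pods should reach Running
#   kubectl get nodes                          # nodes should move to Ready once networking is up
```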

Next, let's install a Dashboard so we can work visually.

Installing the dashboard

File URL; again, a proxy may be needed to reach it

https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.1/aio/deploy/recommended.yaml

Change the Service like this

---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30755
  selector:
    k8s-app: kubernetes-dashboard
---

That is, add type: NodePort and nodePort: 30755
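The port number is not arbitrary: by default the API server only accepts NodePort values from 30000 to 32767, unless the cluster was started with a different --service-node-port-range. A tiny sketch of that check, assuming the default range; `valid_node_port` is a hypothetical helper.

```shell
# Hypothetical helper: succeeds if PORT falls inside the default NodePort range.
valid_node_port() {
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Typical use:
#   valid_node_port 30755 && echo "30755 is usable as a nodePort"
```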

File contents (as downloaded; apply the Service change above to it)

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque
---
kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
    # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
    # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.0.1
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
      annotations:
        seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'
    spec:
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.4
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
          - mountPath: /tmp
            name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}

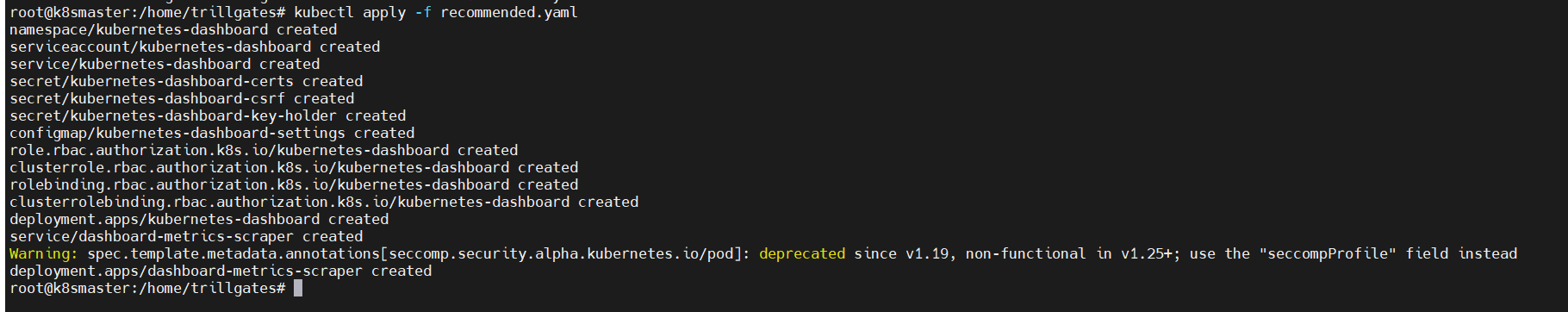

Create the file recommended.yaml

Then install it

kubectl apply -f recommended.yaml

Check the service

kubectl get svc -A | grep kubernetes-dashboard

But I couldn't access it

Access path: https://<IP of any node>:<port>

What's the reason?

https://192.168.220.153:6443/api/v1/namespaces/kube-system/services/kubernetes-dashboard/proxy/

Result:

{
  "kind": "Status",
  "apiVersion": "v1",
  "metadata": {},
  "status": "Failure",
  "message": "services \"kubernetes-dashboard\" is forbidden: User \"system:anonymous\" cannot get resource \"services/proxy\" in API group \"\" in the namespace \"kube-system\"",
  "reason": "Forbidden",
  "details": {
    "name": "kubernetes-dashboard",
    "kind": "services"
  },
  "code": 403
}

Look at the message field

It's a permissions problem: anonymous access is not allowed

Fix:

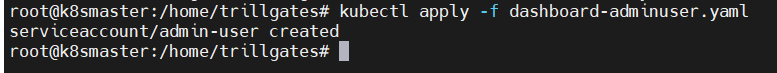

Creating admin-user

First create dashboard-adminuser.yaml

Contents:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard

Apply it

kubectl apply -f dashboard-adminuser.yaml

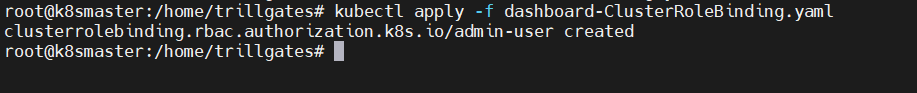

Create the cluster role binding in a file named dashboard-ClusterRoleBinding.yaml

Contents

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard

Apply it

kubectl apply -f dashboard-ClusterRoleBinding.yaml

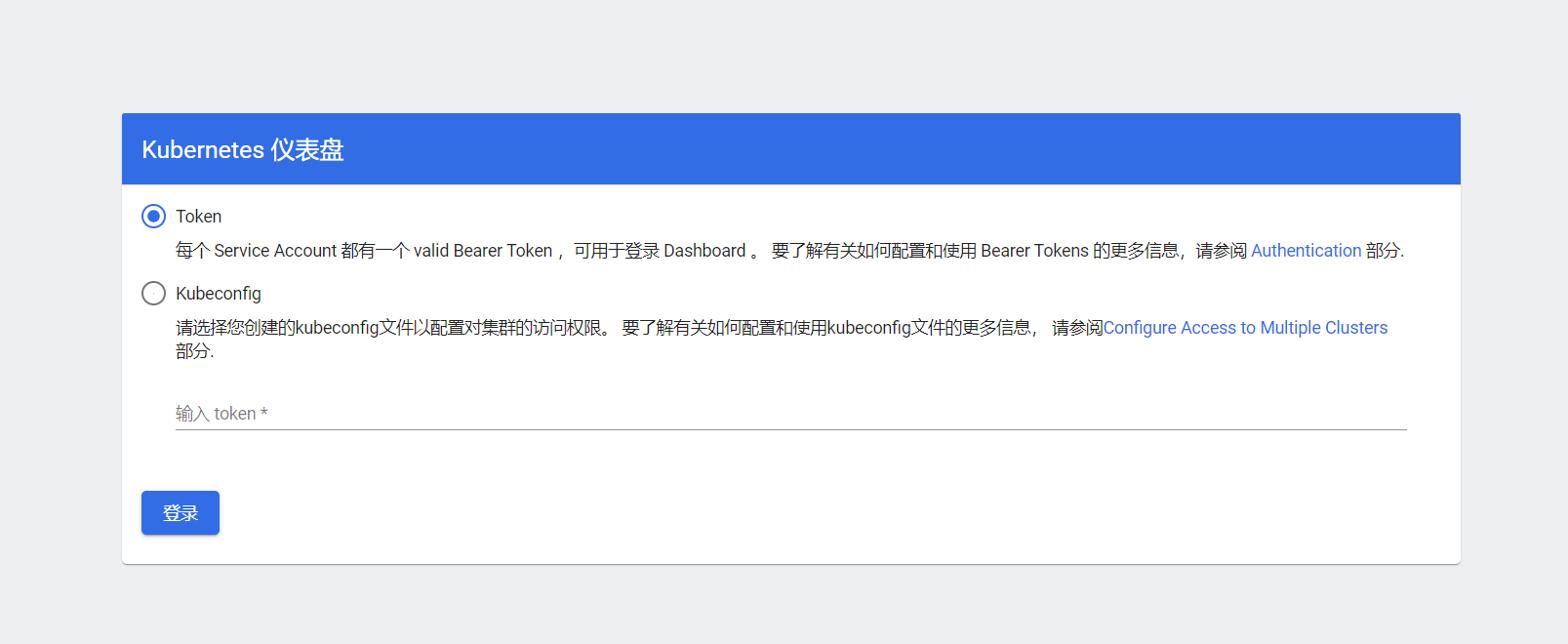

Access the dashboard

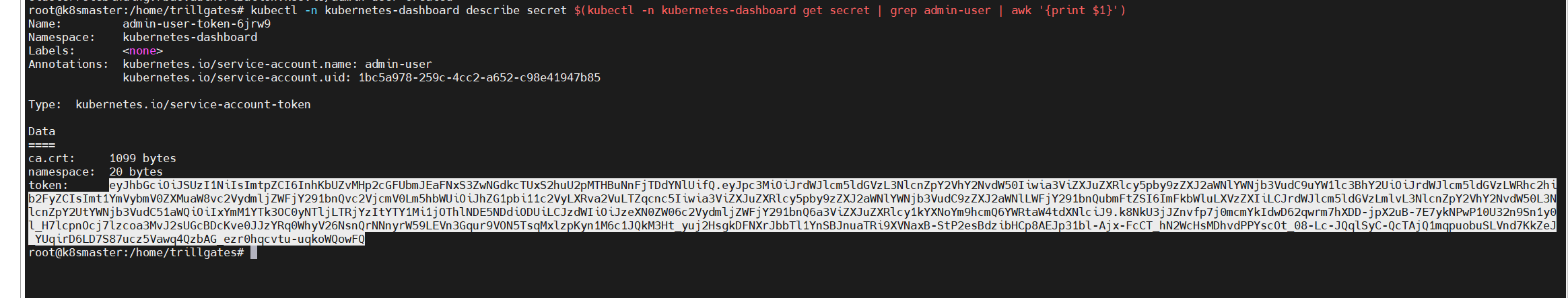

Get the token

kubectl -n kubernetes-dashboard describe secret $(kubectl -n kubernetes-dashboard get secret | grep admin-user | awk '{print $1}')

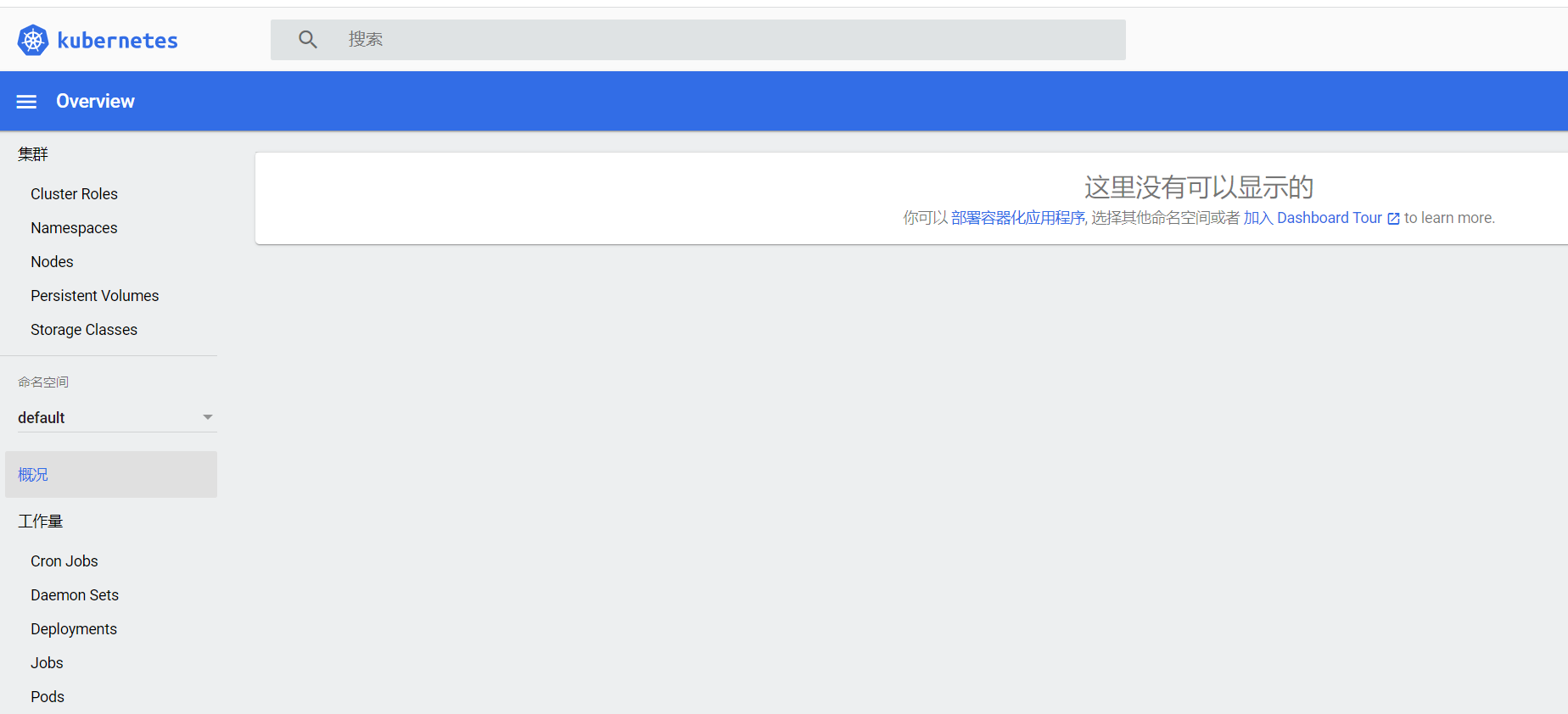

Login succeeded

With that, our environment is basically ready. In the next article we'll deploy the applications.